Hi Folks,

Wondering how you can enable Copilot in a Dynamics 365 model-driven app? Then you have come to the right place. A few days ago I tried to use it but couldn't, so this blog post comes from my own experience.

There were a few things to configure before Copilot would respond to my queries, so I will be talking about them in this blog post today. Let's get started…

Copilot in model-driven Power Apps has been in preview since July 2023.

Prerequisite: You must have a non-production environment with a Dataverse database, apps, and data.

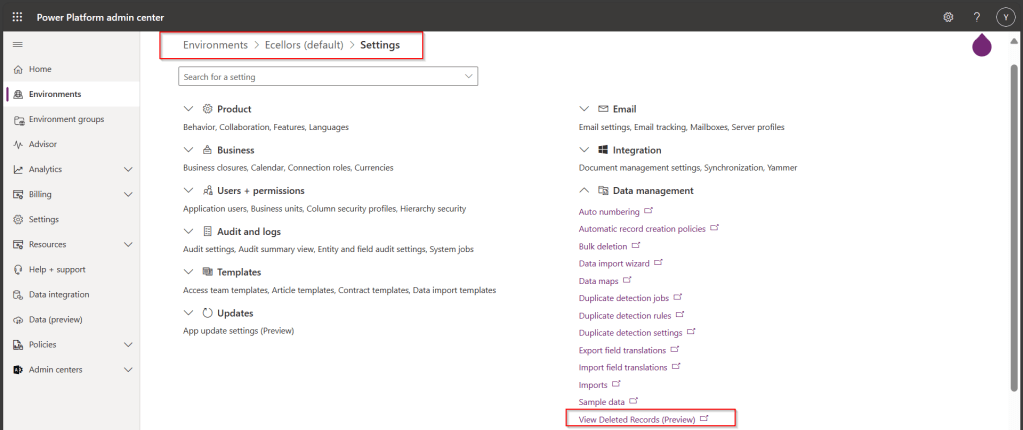

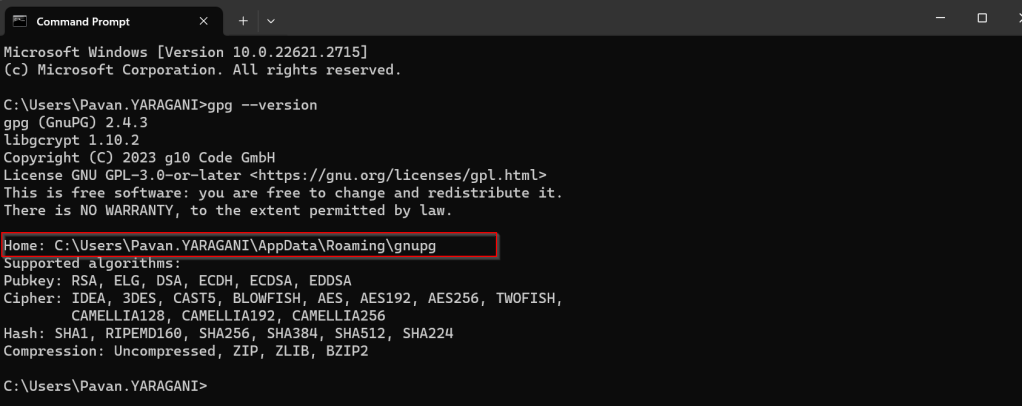

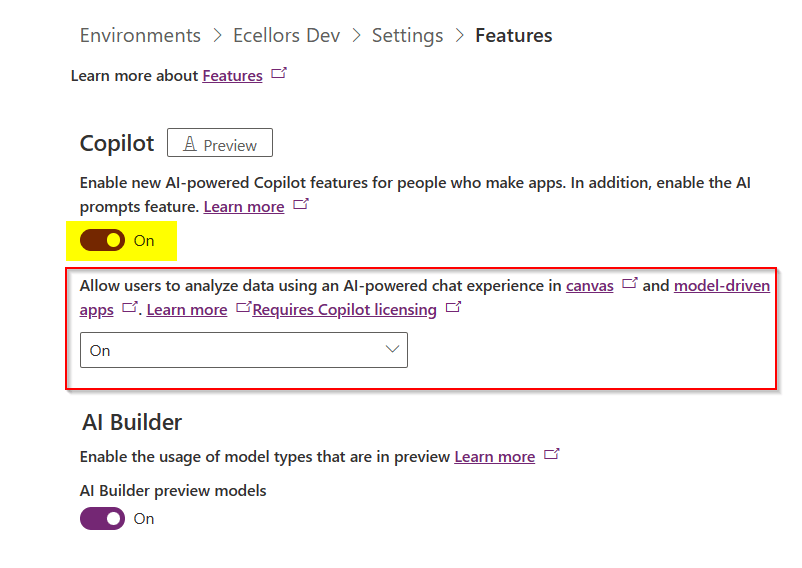

Step 1: Go to Power Platform Admin Center –> select the environment –> Settings –> Product –> Features –> select On for the AI-powered experience as highlighted below. If you are an app maker and want to try it for yourself, you also need to check the option highlighted in yellow below.

Step 2: Go to Power Platform Admin Center –> select the environment –> Settings –> Product –> Behavior –> select Monthly channel or Auto for the Model-driven app release channel option and click Save.

Step 3: This step is important: here we configure a Dataverse table and its columns for Copilot.

Go to Power Apps and make sure that you are in the correct environment.

Select Tables and navigate to the table for which you want to enable the Copilot capability.

Step 4: Here I am using the OOB (out-of-the-box) Account entity; you can choose whichever entity you wish to set up.

Step 5: Navigate to Properties for the Account table as shown below.

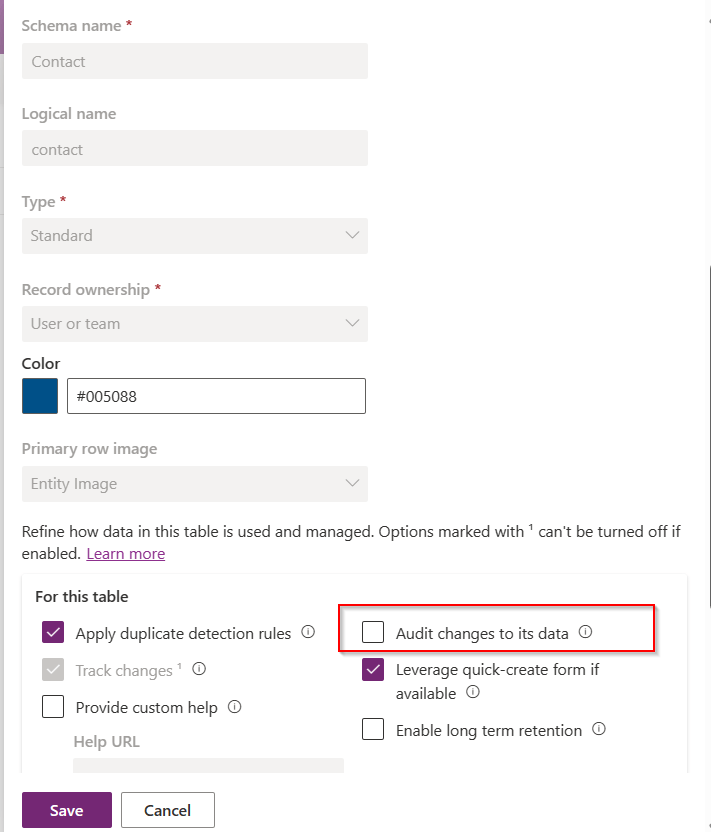

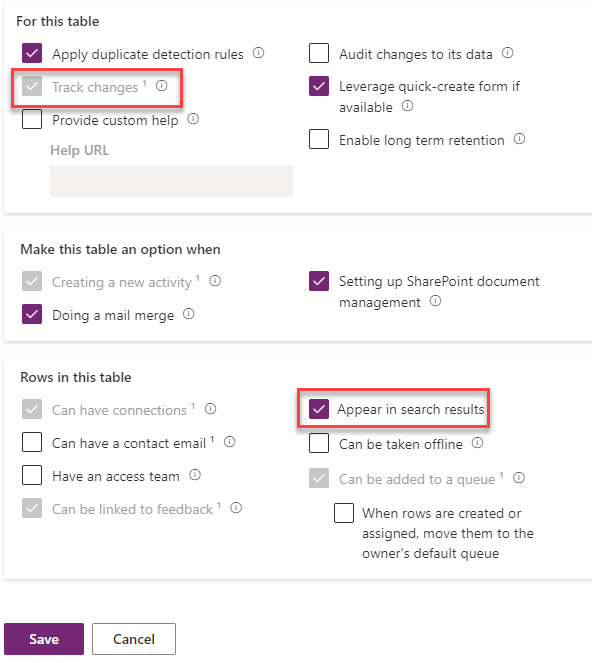

Step 6: Choose the settings as highlighted below and click Save.

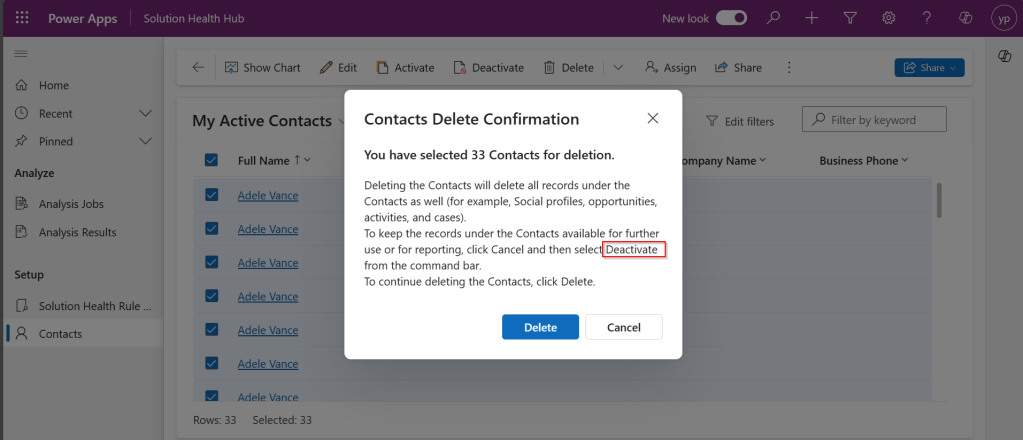

Step 8: Open the Account table and go to Views.

Step 9: In this step, you need to configure the Quick Find view. Add the fields your users will be searching on in Copilot to this view so that Copilot can search them.

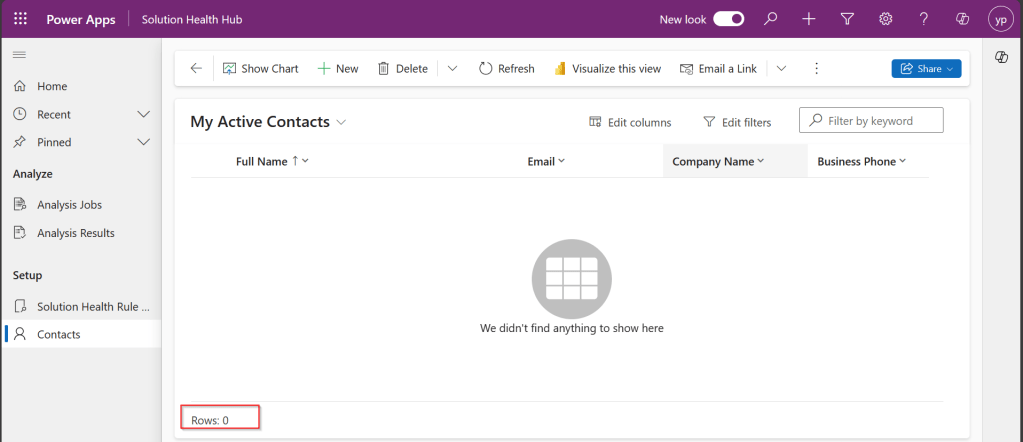

Step 10: Make sure the fields have been added to the view, then save and publish.

That’s it, the configuration is done.
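If you would like to double-check the Quick Find view outside the maker portal, here is a minimal Python sketch that only builds the Dataverse Web API URL for reading that view's definition. The org URL is a placeholder and the helper name `quick_find_view_url` is made up for illustration; I am also assuming the view is stored in the `savedqueries` entity set with `querytype` 4 for Quick Find, so treat this as a sketch rather than a verified recipe.

```python
from urllib.parse import quote

# Placeholder environment URL (assumption): replace with your own org URL.
ORG_URL = "https://yourorg.crm.dynamics.com"

# Assumption: querytype 4 identifies Quick Find views in the savedquery table.
QUICK_FIND_QUERY_TYPE = 4


def quick_find_view_url(table_logical_name: str) -> str:
    """Build a Web API URL that returns the Quick Find view(s) of a table."""
    odata_filter = (
        f"returnedtypecode eq '{table_logical_name}' "
        f"and querytype eq {QUICK_FIND_QUERY_TYPE}"
    )
    # Percent-encode spaces but keep the single quotes readable.
    encoded = quote(odata_filter, safe="'")
    return (
        f"{ORG_URL}/api/data/v9.2/savedqueries"
        f"?$select=name,fetchxml&$filter={encoded}"
    )


url = quick_find_view_url("account")
print(url)
```

Sending this URL as an authenticated GET request should return the view's `fetchxml`, where the searchable columns are expected to appear in the filter marked `isquickfindfields="1"`.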

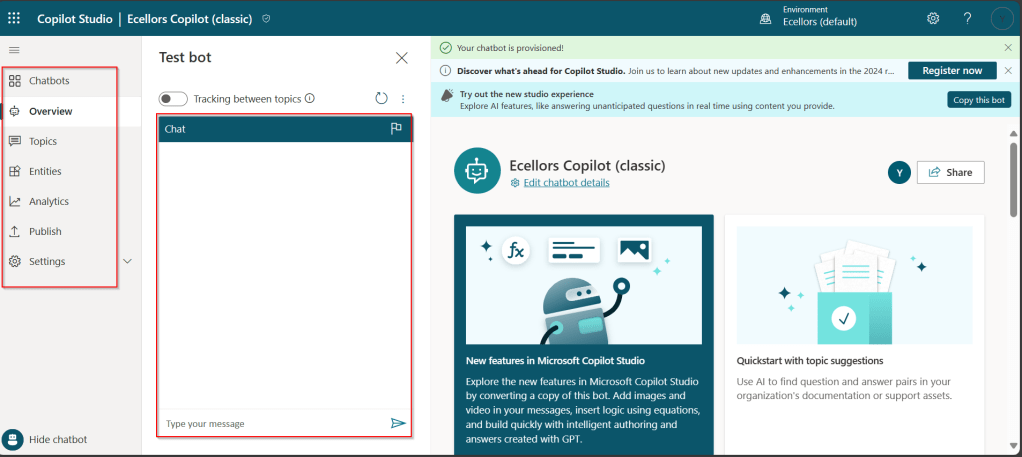

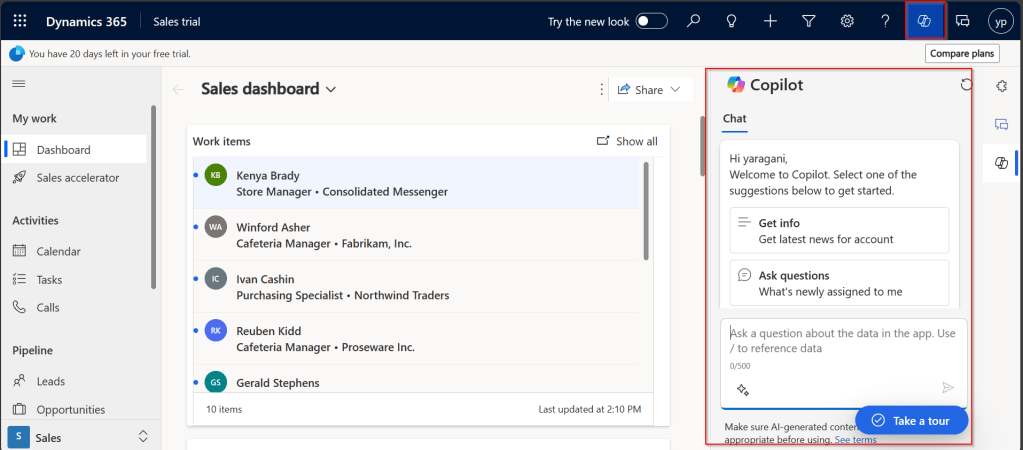

Step 11: In this step, we test Copilot by opening the app in which the configured entity is available. Click the Copilot icon as highlighted below; this opens the chat window for Copilot.

Step 12: Try out a few test prompts.

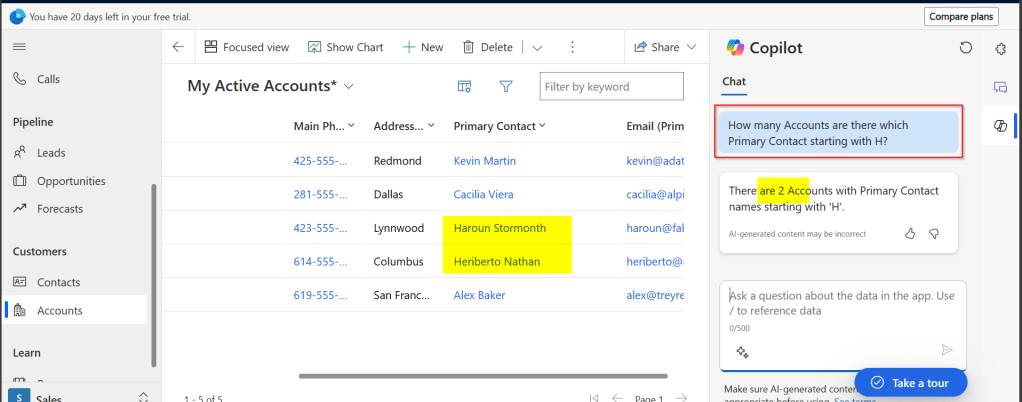

Test 1: Prompt: How many Accounts are there which Primary Contact starting with H? It showed the correct result, as below.

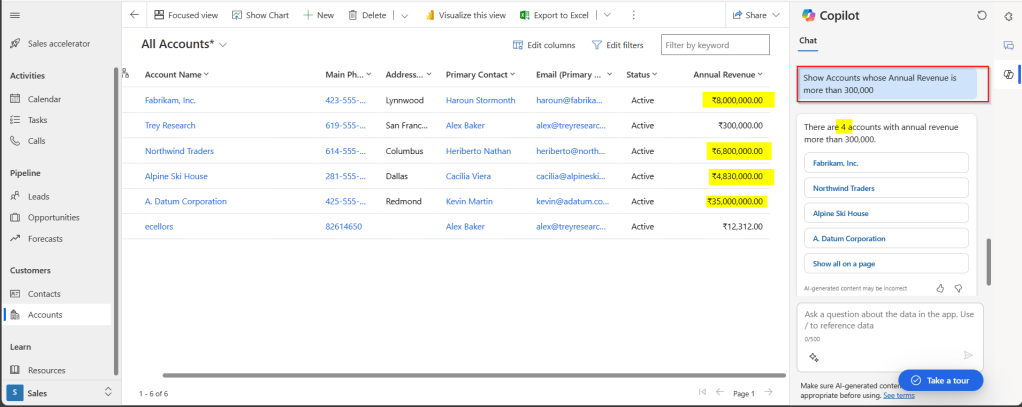

Test 2: Prompt: Show Accounts whose Annual Revenue is more than 300,000? It showed the correct result, as below.
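To cross-check Copilot's answers for the two test prompts, you could run roughly equivalent queries against the Dataverse Web API. The Python sketch below only builds the query URLs. The org URL is a placeholder, the helper name `accounts_query` is made up for illustration, and I am assuming the standard logical names `revenue` (Annual Revenue) and `primarycontactid` (Primary Contact) on the Account table. I have not verified the `startswith` filter on the related contact's name, so take that one as an assumption.

```python
from urllib.parse import quote

# Placeholder environment URL (assumption): replace with your own org URL.
ORG_URL = "https://yourorg.crm.dynamics.com"


def accounts_query(odata_filter: str, count: bool = False) -> str:
    """Build a Dataverse Web API URL listing accounts that match an OData filter."""
    parts = [
        "$select=name,revenue",
        # Percent-encode spaces; keep OData punctuation readable.
        "$filter=" + quote(odata_filter, safe="(),/'"),
    ]
    if count:
        # Ask the service to include the number of matching rows.
        parts.append("$count=true")
    return f"{ORG_URL}/api/data/v9.2/accounts?" + "&".join(parts)


# Test 1: accounts whose primary contact's full name starts with "H".
test1_url = accounts_query("startswith(primarycontactid/fullname,'H')", count=True)

# Test 2: accounts with annual revenue above 300,000.
test2_url = accounts_query("revenue gt 300000")

print(test1_url)
print(test2_url)
```

Each URL would be sent as a GET request with a valid OAuth bearer token. With `$count=true`, the number of matching rows comes back in the `@odata.count` property of the response, which you can compare with the number Copilot reports.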

Hope this helps you to set up Copilot for your model-driven apps. I will leave it to you to try this out.

Make sure you give all the details in the prompt itself. Copilot does not retain the previous response, so you can't continue a conversation by providing information in bits and pieces. For example, ask "Show Accounts whose Annual Revenue is more than 300,000" in one go rather than asking for accounts first and adding the revenue condition in a follow-up. You can set up the same for your custom entities too; just make sure you add the fields to the Quick Find view of that entity.

It is not recommended for production environments, as it is still a preview feature. If a response is not accurate, you can report it to Microsoft by clicking thumbs up or thumbs down and providing the relevant feedback.

A lot more to come in the upcoming days; learning the different aspects of Copilot has become a necessity these days.

That’s it for today…hope this helps…

Cheers,

PMDY